The Information Commissioner's Office has an income of about £62m per year. I receive about 1000 spam emails a year - most are from anonymous foreign actors, but a significant proportion are from registered UK businesses who are flouting the rules. As a point of comparison, in the past year the ICO have taken "enforcement action" against just 2 organisations with respect to email marketing: Join the Triboo Limited, and Monetise Media Limited. In both cases, the organisations were sent "enforcement notices", which they ignored, and were then fined, which probably does a fair bit to demonstrate how much "enforcement notices" are worth.

The ICO is partly funded by fines, so there's incentive to go after one or two big cases (which make them money) and ignore the thousands of small cases (which cost them money).

Anyway, without further ado, here I present reason #324873 why the ICO are a waste of money:

Some company called Autosuggest started spamming me. I won't give them the publicity by showing the email here, but it was badly formatted, contained my first name, my employer's name and information about the nature of my employer's business. There was no unsubscribe link or privacy information in the email.

The email was sent to my "corporate address", so probably wasn't in breach of PECR (although the definitions are rather convoluted and could be interpreted either way). But they've still processed my personal data, and that means they needed to comply with GDPR.

The first thing to note is that Article 14 of the GDPR requires them to have provided me with "privacy information", and they have not done so. It's common for spammers to include a link to that info in their emails, which does fulfil the requirements so long as the emails are sent within a month of obtaining the personal data, but in this case they didn't even do that.

So, back in February, I made a Subject Access Request, which asked for various pieces of information. The most obvious of these was "provide me with a copy of all data you hold which relates to me", but there were a few others such as asking to confirm who the data controller is, and records of processing activities. The "right of access" is provided by Article 15, and although that particular article doesn't mention time scales, Article 12(3) does. They are required by law to respond to my request within 1 month.
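For anyone wanting to work out the deadline for their own request, here is a minimal sketch of the "one month" calculation. It assumes the common reading that the deadline is the same day number in the following month, or the last day of that month if there is no such day. It ignores any adjustment for weekends and bank holidays, and the extension Article 12(3) allows for complex requests. The function names and the example date are hypothetical; the post only says the request was made "back in February".

```python
import calendar
from datetime import date


def one_month_after(received: date) -> date:
    """Return the same day number in the following month.

    If the following month is shorter (e.g. 31 January -> February),
    clamp to the last day of that month. This is an assumed reading
    of "one month", not legal advice.
    """
    year = received.year + (received.month // 12)   # roll over after December
    month = received.month % 12 + 1
    last_day = calendar.monthrange(year, month)[1]  # number of days in that month
    return date(year, month, min(received.day, last_day))


def is_overdue(received: date, today: date) -> bool:
    """True if 'today' is later than the assumed one-month deadline."""
    return today > one_month_after(received)


# Hypothetical request date, purely for illustration.
request_received = date(2023, 2, 14)
print(one_month_after(request_received))
print(is_overdue(request_received, date(2023, 6, 12)))
```

With the hypothetical date above, the deadline comes out as 14 March 2023, so a complaint raised on 12 June 2023 is well past it.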

The month came and went with complete radio silence, and after a second month I sent a chasing email, which at least generated an automated confirmation of receipt that said it would be reviewed by a "consultant".

Needless to say, they didn't reply, so 2 months later (now 4 months since I made the original request) I raised a complaint with the ICO. Another 2 months went by and the ICO responded, upholding my complaint and instructing Autosuggest to respond to me within 14 days.

Thank you for your email of 12 June 2023 regarding a data matter involving Autosuggest. We have considered the information available in relation to this complaint, and we are of the view that Autosuggest has not complied with their Data Protection obligations. This is because you did not receive a response to your subject access request within one calendar month and were sent unsolicited marketing communication.

We have written to Autosuggest about their information rights practices. We requested that the organisation revisit the way your SAR has been handled, provide you with all of the information you are entitled to as soon as possible or within 14 calendar days, and also stop sending you unsolicited marketing emails.

Should Autosuggest not provide you with the personal data to which you are entitled, you have the right to approach the courts for an order for your data to be released. Legal action of this nature is not something that the ICO can assist with, and we would recommend that you seek some independent legal advice before taking this step.

We will not be taking further action on this case at this time. Furthermore, please note that the organisation is not located in the U.K., so the ICO’s jurisdiction does not cover the organisation’s location. However, we hope the organisation adheres to our guidance, upholds best practises, and respects individuals privacy rights.

Please be aware that the ICO cannot award compensation, nor can we advise if it should be awarded. It is for the organisations to decide if compensation is appropriate on a case-by-case basis, or the individual may go to court to claim compensation for damage or distress caused by any organisation if they have breached the Data Protection Act.

Thank you for bringing this matter to our attention.

I note that it does point out that Autosuggest aren't based in the UK. However, they are marketing to UK customers, using the data of UK individuals, so GDPR's extraterritorial reach does apply. Also, I've had similar experiences with the ICO when dealing with UK companies, and a quick comparison between the number of data protection breaches and the number of enforcement actions they have taken does quite a good job of demonstrating that they just don't enforce, even where they can.

Anyway, great - the ICO have ordered them to provide "all of the information" within 14 days, so they'll do that, right?

And indeed, they did send a response.

Thank you for contacting us.

Apologies for our late reply.

We hereby would like to inform you that regarding your personal data we have only stored your first name, last name and e-mail address in our database.

Also, we have removed your e-mail address from our marketing communication as per your wish, this has already happened previously after we have received your e-mail.

In case of any further questions, please feel free to contact us.

Hmm... not exactly comprehensive, is it? It says what types of information they hold, but not what the information actually is, so there's no way to check if it's accurate. Also, their spam included details about my employer, but they haven't mentioned that at all. And most of all, I asked several other questions, none of which they have answered. But they do say I can contact them if there are any further questions, so I did that the next day and received an auto-response saying my email had been received.

2 months later (now 8 months since my original request), still no response, so back to the ICO I go. I asked them to re-open the case since Autosuggest had only provided a minimal response, which was obviously short of the "all of the information" that the ICO had told them to send. I attached copies of the emails, etc.

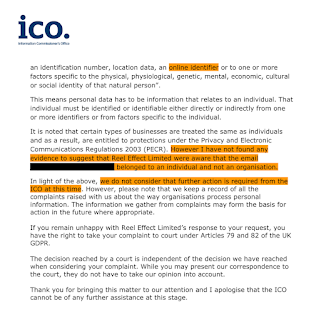

Now, I'm going to look at what the ICO said in their reply in some detail:

Thank you for your email of 16 October 2023 regarding your data concern that involves Autosuggest. Please note that Autosuggest have informed the ICO that they have supplied all the information they hold on their system concerning you. They have ensured that the information provided is complete and comprehensive.

Ok... but I had sent both my Subject Access Request, and Autosuggest's response to the ICO, so the ICO can clearly see that it is not "comprehensive". So why have they presented Autosuggest's statement as-is and not acknowledged that Autosuggest have lied to them?

If you believe that there may be other information missing or have concerns about the completeness of the information provided, it is recommended that you raise this specific concern directly with Autosuggest, giving them an opportunity to address it. By engaging with the organisation first, you can allow them time to respond and resolve any potential issues.

Right, but again, they can see from the evidence I sent them that I did raise this specific concern with Autosuggest, and they didn't reply. So why are the ICO telling me to do something that I have already done and that didn't work?

The ICO expects organisations to genuinely address the concerns raised by individuals and take appropriate action to resolve them.

It's all very well to outline these expectations, but this organisation clearly isn't meeting them, so why are the ICO communicating those expectations to me instead of penalising Autosuggest for not meeting them?

Therefore, it is advisable to follow the steps outlined above before considering involvement with the ICO.

But the ICO can see, from the information I've given them, that I did already follow the steps outlined above before getting them involved, so why are they telling me this?

Should you decide to pursue the matter with Autosuggest, I recommend allowing them a reasonable timeframe like a month, to respond to your concern.

Well! A month, you say? Did you notice that I had given them two?

This will facilitate a constructive dialogue between you and the organisation focused on addressing your data protection rights and rectifying any potential shortcomings.

Did they really mean "constructive dialogue", or maybe they meant to say "constructive monologue" since Autosuggest won't reply to any of my communications? Maybe the ICO should explain how a monologue can be constructive?

Please remember that our role at the ICO is to monitor compliance with data protection regulations and ensure that organisations fulfil their obligations.

But when they are faced with an organisation that is not fulfilling their obligations, what are the ICO doing to "ensure" that they do? In my experience, except for a handful of high profile headline cases, the answer is "nothing".

We encourage you to approach Autosuggest first in order to resolve any outstanding issues you may have.

Again, why are the ICO reiterating this when they can clearly see I already "approached" Autosuggest first and got nowhere?

Thank you for your understanding, and we hope for a swift resolution to your concern with Autosuggest.

Well, whoop-di-do, thank you for all your help ICO.

Anyway, I replied to the ICO pointing all of this out and I got a rather blunt reply that just referred me back to the email above, without addressing the fact that all of the information they had provided was useless.

Oh, and they also told me they would ignore any further emails I sent the ICO regarding this... so... yay?

So this is essentially the state of play in the UK. There is (for now) good data protection legislation, but practically no enforcement. So unless you are concerned about reputational damage to your company, you can completely ignore it with very little risk of penalty. If you're a trashy little spammer, or data broker, why would you comply with the law, since your reputation is already trash anyway?